The Next Computing Model Looks a Lot Like the Old One

I just finished reading an article over at Gigaom about ‘the death of the Web’ (here) and how we will very soon be leaving behind the Web-based computing model built around a browser (such as Internet Explorer, Firefox, or Chrome).

It seems that the Web-based computing model is soon to be replaced with the ‘App’ model.

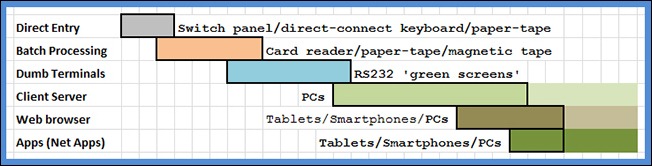

As a bit of background I took the time to craft up the following diagram in which I have tried to capture the main computing models since the first real computer.

This very simple diagram whipped together in Excel—I left the Excel grid lines in the diagram on purpose because I think they give it a more professional look—shows the six broad user-interface computing models.

Direct Entry: The first computing model is what I have termed ‘Direct Entry’ whereby the person using the computer basically had to be sitting in front of the main computing device and it was interfaced via a switching panel and/or a directly connecting keyboard of some type (not necessarily a common QWERTY keyboard) and/or possibly by a paper-tape reader.

The output from this kind of computing model may have been a single row of digits on a numerical vacuum-tube digit display or a small amount of printed output on a teletype roll-paper printer.

Batch Processing: The second computing model to come along was the “Batch Processing” model whereby the computer was loaded and operated primarily using a card reader and/or paper-tape and/or magnetic tape.

These computers generally produced reams of printed ‘fanfold’ output using hammer printers. This output then had to be boxed up and sent off to all the business departments that were waiting for it.

Dumb Terminals: After batch processing came “Dumb Terminals”. This model of computing is sometimes called “green screen” or remote entry computing, and I have heard it referred to as RS232 computing. The back-end computers were typically referred to as mainframe computers. Real mainframe computers were water-cooled, were the size of the average bedroom, and needed specially trained operating personnel to keep them humming along.

The dumb terminal computing model was the first model that allowed for the ‘remote’ usage of computers, even though remote typically meant from within the same building that housed the mainframe or within the campus. Dumb terminals were so called because they could only display mono-spaced single-colour text (typically green on black, but amber on black and white on black were also common) or very basic line-drawing graphics (actually composed of line-drawing characters, so they were not really graphics).

The bulk of the output produced by this computing model was still printed on ‘fanfold’ but the printers were now incredibly fast and used one or more high-speed rotating chains to do the printing, hence these printers were called chain printers. CAD/CAM/CAE drawings were usually printed using either large flat-bed single-colour-at-a-time plotters or roll plotters, and the operators had to change the pen colour manually as and when required.

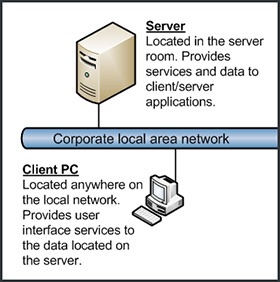

Client/Server: Because of the awesome processing power provided by a PC (compared to a dumb terminal) it was able to do a serious amount of computing itself without relying on the mainframe. Additionally PCs could display true graphical output. This led to computer systems where the compute-intensive user-interface work was done by the client PC and the PC only went off to the mainframe server to either update data in the databases or to get more data to display. Hence this model was dubbed client/server.

By and large in business this model is still by far the dominant computing model in use. The world’s largest and most used business applications, such as SAP, employ this model.

With this model the output is generally used in real-time directly off-screen or printed via cut-sheet laser printers (on Letter size paper in the US and on A4 size paper everywhere else). In the case of engineering and CAD/CAM/CAE drawings large-format multi-colour wet-ink roll printers are used.

Web browser: When it first started to come about the Web browser computing model almost attained miracle status. The theory was that Web-based applications would not need anything installed on the client computers in order for them to work (apart from a compliant browser); they were instinctive and intuitive to use so no user training was required (because, after all, they just used a browser to make them work and everyone knew how to use a browser); they were self-documenting (which is code for they didn’t need to be documented); they were easy to develop (make) because they just used standard Internet technologies; they were easy to deploy as nothing needed ‘installing’ or maintaining on the client PC; and they were (so it seemed) damn near maintenance free.

Basically Web-based applications solved all the big issues associated with client/server computing—apparently.

Sadly, in the end, almost none of this ended up being true. In the business world Web-based applications tend to need all kinds of little bits and bobs deployed to the client computers to make them work as required, plus they usually need very specific versions of the browser to be used. But that is a whole other story and would take up way too much space in this posting to explain.

Output is the same as for client/server.

So what happened was that a whole new type of computer application was invented. These new ‘apps’ used the Internet to access the back-end (or what might be considered the ‘server’ part) and had a new small-footprint mini-application at the front-end (or what might be considered the ‘client’ part).

Individual ‘apps’ tend to be basic with very simple interfaces so that they can be used and operated on the smaller screens of mobile computing devices.

As you can see from my very quickly constructed picture, at the bottom there is still the client (please excuse my dorky smartphone picture but I only have Visio 2007 and this is the best it has in it for a smartphone) and at the top there are still the servers. The changes compared to the classic client/server model are:

- The client is not a PC (but it could be and likely soon will be).

- The client has to use the Internet to get to the network that the servers are connected to (which in the scenario shown are the servers used by TWiT).

Hence the mobile device ‘app’ is basically a re-invention of the client/server computing model, but with the Internet providing the server functionality and the mobile device’s reduced-functionality mini-application acting as the client component.

For example an ‘app’ might only provide access to one newspaper, like the New York Times digital subscriber app. The New York Times app, as you might expect, simply provides you with access to the New York Times and nothing else. Similarly the Twitter ‘app’ only provides access to Twitter, and the TWiT ‘app’ only provides access to TWiT (This Week in Tech).
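To make the comparison concrete, here is a minimal sketch of that split in Python. It is not how any of the apps mentioned above actually work: the `/headlines` endpoint, the `HeadlineHandler` and `HeadlineApp` names, and the sample data are all made up for illustration, and a local HTTP server stands in for the back-end that a real app would reach over the Internet.

```python
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.request import ProxyHandler, build_opener

# Hypothetical data owned by the back-end; a real service would use a database.
HEADLINES = ["Story one", "Story two"]


class HeadlineHandler(BaseHTTPRequestHandler):
    """The 'server' part: owns the data and answers one kind of request."""

    def do_GET(self):
        if self.path == "/headlines":
            body = json.dumps(HEADLINES).encode("utf-8")
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)
        else:
            self.send_error(404)

    def log_message(self, format, *args):
        pass  # keep the demo quiet


def start_server():
    """Start the back-end on a free local port; return (server, base_url)."""
    server = HTTPServer(("127.0.0.1", 0), HeadlineHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server, f"http://127.0.0.1:{server.server_port}"


class HeadlineApp:
    """The 'client' part: a single-purpose mini-application."""

    def __init__(self, base_url):
        self.base_url = base_url
        # Ignore any system proxy so the demo talks directly to the local server.
        self._opener = build_opener(ProxyHandler({}))

    def fetch_headlines(self):
        """Ask the server for data; the app keeps none of its own."""
        url = f"{self.base_url}/headlines"
        with self._opener.open(url, timeout=5) as response:
            return json.loads(response.read().decode("utf-8"))

    def render(self):
        """Do the only thing this app does: show the headlines."""
        return "\n".join(f"- {title}" for title in self.fetch_headlines())


if __name__ == "__main__":
    server, base_url = start_server()
    try:
        print(HeadlineApp(base_url).render())
    finally:
        server.shutdown()
        server.server_close()
```

Swap the local address for a public one and the shape stays the same: the app does one job and fetches everything it shows from the servers, which is the classic client/server arrangement with the Internet in the middle.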

The Point Being …

The point of all this is that the ‘app’ based computing model that is getting all the headlines lately is very much like the old client/server model except that apps:

- Go via the Internet to make the required connection to the server(s).

- Tend to have much less functionality per app than your typical client/server client application.

The other thing with ‘apps’ when compared to Web browsing is that they have to be downloaded and installed. This means that, if the developer of the ‘app’ wants to, they can charge you money for it. Hence ‘apps’ provide a new and better way for developers and companies who provide ‘app’ services to make money.

At this stage ‘apps’ are more or less confined to mobile computing devices such as smartphones and Tablet PCs. But this is going to change very soon when Windows 8 for PCs is released. Windows 8 is designed to work using the ‘apps’ model so those ‘apps’ that you use on your mobile devices will start to become available for Windows 8 PCs.

Apparently this is a good thing … but I am yet to be convinced …